AI adoption has moved from experimental to essential. 78% of companies now use AI in some form, up from just 55% in 2023. But the speed of adoption has outpaced responsibility. McKinsey’s research reveals that only 17% of C-suite leaders who benchmark their AI systems prioritize fairness, bias, transparency, and regulatory compliance. The rest are effectively flying blind when it comes to ethics.

The consequences of that gap can no longer be ignored. Biased algorithms, opaque decision-making, and data privacy failures are major business risks that are eroding consumer trust and attracting regulatory action across every major market.

So what should businesses focus on moving forward? Ethical AI frameworks are the answer to this moment. Businesses should treat them not as compliance tasks but as an opportunity to build customer trust, manage risk, and secure long-term success. Here are the top ones every business should be adopting right now.

What is Ethical AI?

Ethical AI is about designing, developing, and deploying artificial intelligence in a way that is fair, transparent, accountable, and respectful of human rights. There is no single rule or regulation for it. It is a commitment to ensure that AI works for people, not against them.

The need for ethical AI is urgent. Facial recognition systems misclassify women and people of color at disproportionately high rates. Healthcare AI models have repeatedly underserved minority patients. Air Canada had to compensate a customer whom its chatbot had misled. These are just some of the consequences when AI operates without ethical constraints.

Ethical AI addresses four recurring risk areas:

- Bias – unfair discrimination against individuals or groups

- Explainability – decisions that users and developers cannot understand

- Robustness – systems that fail under unexpected conditions

- Privacy – inadequate protection of personal data

Why Do Ethical AI Frameworks Matter?

Now that you know what ethical AI is, it is time to look at why these frameworks matter so much. Whether in healthcare, marketing, or customer service, AI is making important decisions that directly affect people. As adoption increases, so do the risks. And in 2026, the cost of ignoring them has never been higher.

When AI Goes Wrong, Businesses Pay the Price

Unaccountable AI creates legal liability, erodes customer confidence, and stalls growth. Amazon’s internal resume screening tool had to be scrapped altogether after it was found to be biased against female applicants. It had been trained on years of hiring data from the company’s own male-dominated hiring history. Industries like insurance, finance, healthcare, and recruitment are learning the same hard lesson: ungoverned AI is a dangerous asset.

The Regulatory Window Is Closing

Ethical AI frameworks and rules are no longer voluntary. The EU AI Act, widely described as the GDPR for AI, becomes fully applicable in August 2026, with binding requirements for businesses using AI in high-risk areas like hiring, banking, and education. Non-compliance carries heavy penalties, and its reach extends beyond Europe to any business serving EU customers.

New York now legally requires independent bias audits of AI hiring tools. Colorado prohibits insurers from using discriminatory algorithms. This is the direction regulation is heading, globally and fast.

Ethics Has Become a Competitive Advantage

Compliance is only part of the story. According to the World Economic Forum, effective AI governance is a strategic advantage that unlocks sustainable growth by improving customer engagement, opening new revenue streams, and ensuring AI initiatives are vetted for safety and business impact. IMD’s 2025 AI Maturity Index found that firms with publicly disclosed AI ethics principles and dedicated governance roles now rank at the top across nearly every major industry sector. The businesses pulling ahead are the ones that have the most trusted AI.

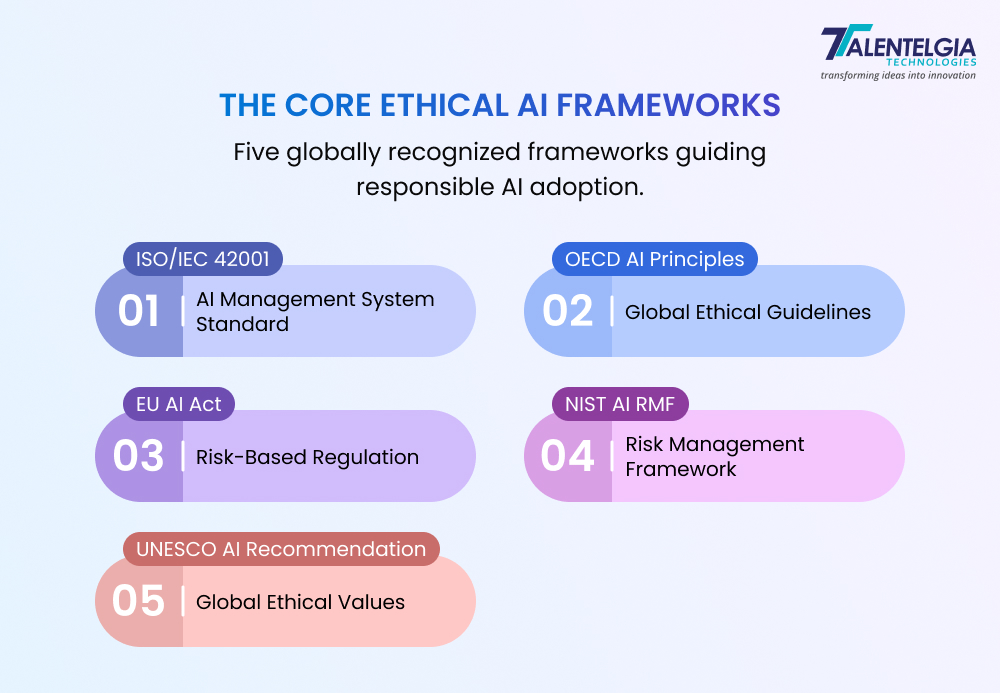

The Core Ethical AI Frameworks

ISO/IEC 42001 – AI Management System Standard

Published in December 2023

ISO 42001 is the world’s first AI management system standard, designed specifically to address the ethical and transparency challenges that come with deploying AI. If your business is already certified for information security, this is a natural and relatively straightforward next step.

What sets it apart from every other framework on this list is one word: certification. Unlike certain other frameworks that are basically guidelines, it is an independently audited standard that gives customers, regulators, and partners verifiable proof that your AI governance is real.

The standard is built on five core pillars:

- Transparency – clear communication of how AI functions and makes decisions

- Accountability – defined roles and responsibilities for AI outcomes

- Human Oversight – established thresholds for when humans must intervene

- Data Governance – responsible, high-quality data use throughout the AI lifecycle

- Continual Improvement – regular performance reviews and governance updates

Brands that implemented it early are already seeing the benefits. Cornerstone Galaxy is one example. It achieved ISO 42001 certification in December 2025, describing it as a direct differentiator in client trust and market positioning.

OECD AI Principles

First established in 2019 and updated in May 2024

Most businesses jump straight into compliance tools and technical frameworks without even asking the most basic question, “What do we actually stand for when it comes to AI?” The OECD AI Principles answer exactly that.

These principles were the first intergovernmental standard on AI ever created, now endorsed by 47 jurisdictions, including the European Union, the United States, and the United Nations. They are not the product of any one company; they were shaped by years of consultation across governments, businesses, civil society, and technical experts. This is why they carry so much weight globally.

Here are its core principles for every business:

- Benefit people and the planet – AI should be beneficial for everyone, not just the stakeholders. Environmental impact, social inclusion, and economic fairness are also a part of it.

- Respect human rights and democratic values – AI must not be used to spread misinformation. It must be fair and not discriminate against anyone.

- Be transparent and explainable – If your AI makes a decision that affects someone, whether it is a loan approval, a job shortlist, or a medical recommendation, that person should be able to understand why, in plain, simple language rather than technical jargon.

- Be robust, secure, and safe – AI systems need to be completely reliable even if things go wrong. The 2024 update made this even stronger, requiring that AI systems can be overridden, repaired, or shut down if they start causing harm.

- Be accountable – Someone in your organisation must own the outcomes of your AI systems. Accountability includes having audit trails, risk management processes, and a clear chain of responsibility. Just having good intentions won’t work.

The EU AI Act

Entered into force in August 2024 and becomes fully applicable on 2 August 2026.

The EU AI Act is the one ethical AI framework on this list that is not optional. With full applicability approaching, right now is the most critical window for businesses to get compliant.

It works on a four-tier risk classification system:

- Unacceptable risk – strictly banned. This includes social scoring systems, manipulative AI, and most real-time biometric surveillance. These prohibitions have been in effect since February 2025

- High risk – strict mandatory requirements. It covers AI used in hiring, credit scoring, healthcare, education, and law enforcement

- Limited risk – transparency obligations only. It includes disclosing when users are interacting with a chatbot

- Minimal risk – no additional requirements
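The four tiers above can be sketched as a simple triage lookup for an internal AI inventory. This is a minimal illustration only: the use-case labels (`social_scoring`, `customer_chatbot`, `spam_filter`, and so on) and the `triage` helper are hypothetical names chosen for this example, not terms defined by the Act, and real classification needs legal review.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical internal use-case labels mapped to the tiers listed above.
# These labels are illustrative assumptions, not wording from the Act.
TIER_BY_USE_CASE = {
    "social_scoring": RiskTier.UNACCEPTABLE,
    "hiring": RiskTier.HIGH,
    "credit_scoring": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}

# One-line obligation summary per tier, taken from the list above.
OBLIGATION = {
    RiskTier.UNACCEPTABLE: "banned, do not deploy",
    RiskTier.HIGH: "strict mandatory requirements",
    RiskTier.LIMITED: "transparency obligations only",
    RiskTier.MINIMAL: "no additional requirements",
}


def triage(use_case: str) -> str:
    """Return the tier and obligation, or flag unknown cases for review."""
    tier = TIER_BY_USE_CASE.get(use_case)
    if tier is None:
        # Anything not yet mapped is escalated rather than assumed safe.
        return "unclassified: escalate for legal review"
    return f"{tier.value}: {OBLIGATION[tier]}"


print(triage("hiring"))
print(triage("recommendation_engine"))
```

Defaulting unknown use cases to "escalate" rather than "minimal" is a deliberate choice in this sketch: it keeps an unmapped system from silently skipping review.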

The Act’s reach mirrors that of the GDPR. Any organisation, no matter where it is located, must comply if its AI systems are used within the EU or produce outputs that affect people in the EU. A U.S. company running AI-powered loan approvals for European customers is fully in scope.

Non-compliance carries fines of up to €35 million or 7% of global annual turnover. And these penalties are serious enough to threaten the viability of your business.
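To make the two figures concrete, here is a small worked calculation. It assumes the top-tier ceiling is whichever of the two amounts is higher, which is how the Act's penalty provision for the most serious violations is generally read for larger companies; the function name `max_fine_eur` is hypothetical, and actual fines are set case by case and can be far below the ceiling.

```python
FIXED_CAP_EUR = 35_000_000   # flat ceiling quoted above
TURNOVER_PERCENT = 7         # percentage of global annual turnover quoted above


def max_fine_eur(global_annual_turnover_eur: int) -> float:
    """Upper bound of the fine, assuming 'whichever is higher' applies."""
    turnover_cap = global_annual_turnover_eur * TURNOVER_PERCENT / 100
    return max(FIXED_CAP_EUR, turnover_cap)


# A company with EUR 1 billion turnover: 7% exceeds the flat cap.
print(max_fine_eur(1_000_000_000))

# A company with EUR 100 million turnover: the flat cap dominates.
print(max_fine_eur(100_000_000))
```

For the first company the ceiling is €70 million (7% of turnover); for the second, 7% would be only €7 million, so the €35 million figure sets the ceiling instead.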

NIST AI Risk Management Framework (AI RMF)

Released in January 2023 by the U.S. National Institute of Standards and Technology.

What makes NIST AI RMF stand out is the flexibility it offers.

The framework runs on four interconnected functions:

- Govern – set the rules, assign clear roles, and build a risk-aware culture from the top down. It’s basically the structure on which everything is based.

- Map – identify and document all AI systems used within the organization. It also involves what risk each system carries within its specific context.

- Measure – assess those risks using both quantitative and qualitative methods, tracking them with metrics that actually reflect real-world harm

- Manage – act on what you find through mitigation, monitoring, and continuous improvement. It should not be a one-time exercise but an ongoing practice
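One way to picture how the four functions fit together is a lightweight risk register. The sketch below is an assumption-heavy illustration: the `AISystemRisk` fields, the 1 to 5 likelihood and impact scales, and the action threshold of 12 are all hypothetical choices for this example, since the framework itself does not prescribe a scoring scheme.

```python
from dataclasses import dataclass, field


@dataclass
class AISystemRisk:
    system: str        # Map: which AI system is in use
    context: str       # Map: where and how it is used
    owner: str         # Govern: the accountable role
    likelihood: int    # Measure: 1 (rare) to 5 (frequent), assumed scale
    impact: int        # Measure: 1 (minor) to 5 (severe), assumed scale
    mitigations: list[str] = field(default_factory=list)  # Manage

    @property
    def score(self) -> int:
        """Simple likelihood-times-impact score (an assumed metric)."""
        return self.likelihood * self.impact


def needs_action(register, threshold=12):
    """Manage: return risks at or above the threshold, highest first."""
    flagged = [risk for risk in register if risk.score >= threshold]
    return sorted(flagged, key=lambda risk: risk.score, reverse=True)


register = [
    AISystemRisk("resume screener", "hiring shortlist", "Head of HR", 3, 5),
    AISystemRisk("support chatbot", "customer FAQ", "Support lead", 2, 2),
]

for risk in needs_action(register):
    print(risk.system, risk.score, risk.owner)
```

In practice a register like this would be revisited on a schedule, since the framework treats managing risk as ongoing rather than a one-time exercise.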

The framework is technically voluntary, but don’t let that mislead you. Within 18 months of its release, the NIST AI RMF appeared in executive orders, state AI laws, and federal procurement requirements. The Colorado AI Act references it directly. The EU AI Act’s implementing guidance cites it.

The FTC, FDA, SEC, CFPB, and EEOC all now reference NIST AI RMF principles in their expectations for safe AI deployment. Businesses that fail to follow it are becoming more vulnerable, even without a formal rule forcing them to adopt it.

UNESCO Recommendation on the Ethics of AI

Adopted in November 2021

Its adoption marked the first time all UNESCO Member States reached consensus on a global ethical framework for AI, and it was also the first time companies formally engaged with the United Nations in this space. That is not a small thing. It means the values embedded in this framework reflect genuine global agreement, not the priorities of any single government or industry.

The framework is based on four crucial values that every business using AI should take seriously:

- Human rights and dignity – AI must never undermine the fundamental rights of individuals.

- Peaceful and inclusive societies – AI should reduce inequality, not deepen it.

- Diversity and inclusiveness – AI development must reflect the full range of human experience.

- Environmental sustainability – The environmental cost of AI systems must be actively managed.

What makes this practically useful for businesses is the Ethical Impact Assessment (EIA). It is a structured tool that UNESCO developed to help organisations identify and address the real-world harms of their AI systems before and after deployment. Early adopters have seen measurable results.

Companies including Microsoft, Telefónica, Salesforce, Mastercard, and Lenovo have formally committed to implementing the Recommendation.


Practical Implementation Strategies for Ethical AI

Understanding the ethical AI frameworks is only the first step. The real challenge begins when businesses attempt to apply ethical AI practices in day-to-day operations.

Establish Leadership Ownership

Ethical AI initiatives must start at the leadership level. Senior executives should clearly establish the company’s ethical priorities, assign appropriate resources, and maintain accountability across all teams. Many organizations create dedicated roles, such as Responsible AI leads or governance officers, to coordinate the work and ensure it is implemented properly.

Build Cross-Functional Teams

Implementing ethical AI demands collaboration between:

- Data scientists

- Engineers

- Legal teams

- Risk managers

- Business leaders

It is a team effort. Cross-functional teams help ensure that every kind of risk, whether technical or legal, is addressed during system development. This collaborative structure reduces blind spots and improves decision quality.

Integrate Ethics into the Design Phase

Ethical AI should begin at the earliest stage of development, not after deployment.

This approach, often called AI ethics by design, includes:

- Reviewing training data for bias

- Defining acceptable system behaviors

- Identifying high-risk use cases

- Setting safety boundaries before launch

Early integration reduces costly fixes later in the development lifecycle.

Conduct Regular AI Audits

AI systems should be reviewed continuously to detect emerging risks.

Audits help identify:

- Model bias

- Performance drift

- Privacy risks

- Unexpected system behavior

Internal and independent audits strengthen accountability and improve system reliability. Regular audits also help organizations prepare for regulatory inspections and certification requirements.
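As one concrete example of a model bias check, an audit might compare selection rates across groups. The sketch below computes each group's rate and its ratio to the best-treated group. The sample data, the group labels, and the 0.8 flagging threshold (the "four-fifths" rule of thumb from U.S. employment guidance) are assumptions for illustration; a real audit would follow whatever metric the applicable regulation specifies.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, selected) pairs -> {group: rate}."""
    totals, selected = Counter(), Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Each group's selection rate divided by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no selections in any group, ratio is undefined")
    return {group: rate / best for group, rate in rates.items()}


# Made-up screening outcomes: group A 40 of 100 selected, group B 25 of 100.
records = (
    [("A", True)] * 40 + [("A", False)] * 60
    + [("B", True)] * 25 + [("B", False)] * 75
)

rates = selection_rates(records)
ratios = impact_ratios(rates)

# Flag groups whose ratio falls below the assumed 0.8 rule-of-thumb threshold.
flagged = [group for group, ratio in ratios.items() if ratio < 0.8]
print(rates, ratios, flagged)
```

A low ratio does not prove discrimination on its own, but it tells auditors where to look first.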

Train Employees About Ethical Awareness

Aligning your technology with ethical frameworks is not enough on its own. Training people also plays a crucial role.

Organizations should provide regular training on:

- Responsible data use

- Bias awareness

- AI risk identification

- Ethical decision-making

When employees understand the ethical requirements, they are more likely to spot issues themselves and act responsibly.

Conclusion

In the future, regulations for ethical AI will tighten, public awareness will grow, and expectations of responsible technology will rise sharply across every industry. Businesses that are already embedding AI ethics into their work are the ones that will reduce risk and position themselves as trusted leaders in the digital economy.
